You already know what SERP data is and why it matters. I am not here to explain basics. I am here to help you think clearly about how to choose the right approach and avoid tools that create more problems than they solve.

I have worked around search data long enough to see patterns. The tools that look simple often fail at scale. The tools that look complex often waste time. The right option sits in the middle and focuses on reliability, accuracy, and control.

When I evaluate options, I look at stability, data accuracy, geo control, and how much effort it takes to keep things running. That is the lens I am using here.

Early in any SERP data setup, access matters. A reliable SERP scraper API gives you consistent results without constant blocks. Many teams also test a free SERP API tier first to validate structure, response format, and speed before scaling usage.

This guide walks you through how to think about SERP scrapers, tracking APIs, and ranking APIs, and why some platforms stand out when consistency matters.

Why SERP Data Quality Matters More Than Volume

Raw volume does not help if the data fails validation.

I see teams pull large datasets that look impressive but fail basic validation. Rankings shift. Locations mismatch. Pages disappear. This usually happens because the scraping layer breaks under pressure.

SERP data needs to reflect real search conditions.

That means:

- Correct location simulation

- Clean IP reputation

- Stable session handling

- Accurate parsing of search features

If those fail, the insights fail.

A SERP scraper must behave like a real user search. Anything else creates noise.
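The checks above can be sketched as a small validation pass over one parsed response. This is a minimal sketch, not any provider's schema: the field names `location`, `organic`, `position`, and `url` are assumptions I made for illustration, so adjust them to whatever your API returns.

```python
from typing import Any


def validate_serp_response(resp: dict[str, Any], requested_location: str) -> list[str]:
    """Return a list of problems found in one parsed SERP response."""
    problems = []

    # Location check: the response should echo the location we asked for.
    reported = str(resp.get("location") or "")
    if reported.lower() != requested_location.lower():
        problems.append("location mismatch")

    organic = resp.get("organic", [])
    if not organic:
        problems.append("no organic results")

    # Positions should run 1, 2, 3, ... with no gaps or duplicates.
    positions = [item.get("position") for item in organic]
    if positions != list(range(1, len(organic) + 1)):
        problems.append("positions not contiguous")

    # Every result needs a URL to be usable for rank tracking.
    if any(not item.get("url") for item in organic):
        problems.append("missing url")

    return problems


# Hypothetical sample: position 2 is missing, which usually signals a parsing failure.
sample = {
    "location": "Austin, Texas, United States",
    "organic": [
        {"position": 1, "url": "https://example.com/a"},
        {"position": 3, "url": "https://example.org/b"},
    ],
}
print(validate_serp_response(sample, "Austin, Texas, United States"))
# ['positions not contiguous']
```

Running a pass like this on every response catches noise before it reaches your reports.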

Understanding SERP Scraper and SERP Scraper API Differences

A basic SERP scraper often runs as a script. It sends requests, rotates IPs, and hopes results come back clean.

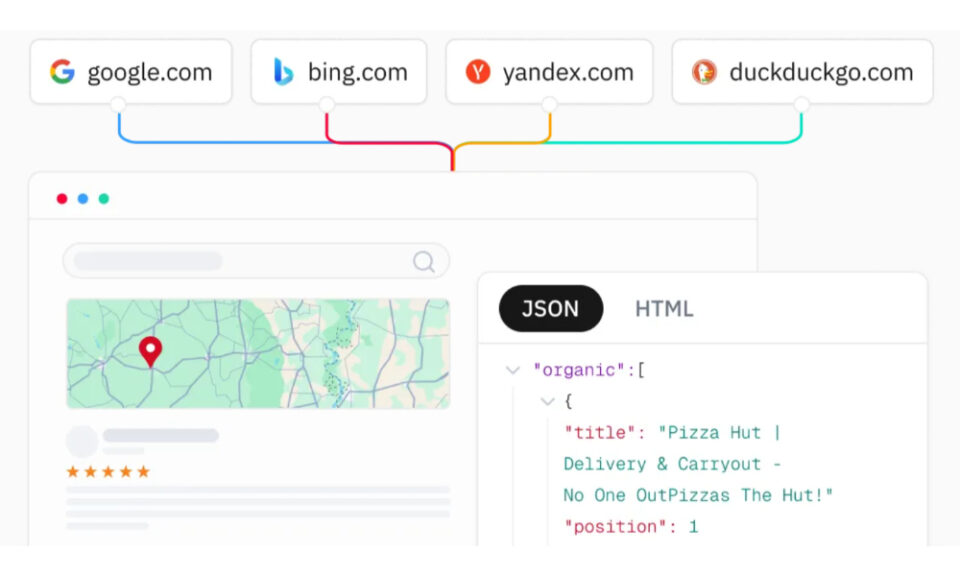

A SERP scraper API handles the hard parts for you.

Here is how I separate them.

A scraper script requires you to manage:

- Proxies

- Headers

- Rotation logic

- CAPTCHA handling

- HTML parsing

A SERP scraper API abstracts that work.

You send a request. You receive structured results.

That difference saves time and reduces failure points.
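To make the "send a request, receive structured results" flow concrete, here is a minimal offline sketch. The endpoint `https://api.example.com/serp`, the parameter names, and the response fields are all hypothetical placeholders, not a real provider's interface. A canned JSON body stands in for the network call so the example runs anywhere.

```python
import json
from urllib.parse import urlencode

# Hypothetical endpoint and parameter names, for illustration only.
API_ENDPOINT = "https://api.example.com/serp"


def build_request_url(query: str, country: str, device: str = "desktop") -> str:
    """Encode the search parameters into a request URL."""
    params = {"q": query, "country": country, "device": device, "format": "json"}
    return f"{API_ENDPOINT}?{urlencode(params)}"


def extract_organic(raw_body: str) -> list[tuple[int, str]]:
    """Pull (position, url) pairs out of a JSON response body."""
    data = json.loads(raw_body)
    return [(item["position"], item["url"]) for item in data.get("organic", [])]


# A canned response body stands in for the real HTTP response.
sample_body = json.dumps({
    "organic": [
        {"position": 1, "url": "https://example.com/"},
        {"position": 2, "url": "https://example.org/guide"},
    ]
})

print(build_request_url("running shoes", "us"))
print(extract_organic(sample_body))
```

Notice what is absent: no proxy pool, no header rotation, no HTML parsing. With a script, all of that would sit between the request and the list of results.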

SERP Tracking API Versus SERP Rank API

These terms overlap, but intent matters.

A SERP tracking API focuses on monitoring changes over time. You care about position movement, volatility, and consistency.

A SERP rank API focuses on snapshot accuracy. You want to know where a keyword ranks right now under specific conditions.

Strong platforms support both without forcing separate systems.

You should expect:

- Daily or scheduled queries

- Location-specific ranking

- Device simulation

- Stable result formats

Without those, long-term tracking becomes unreliable.
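The distinction between a rank snapshot and tracking can be shown in a few lines. In this sketch, `rank_of` answers the rank question (where does a domain sit right now) and `movement` answers the tracking question (how did it change between two snapshots). Both function names and the sample URLs are my own illustrations, and each snapshot is assumed to be an ordered list of result URLs.

```python
from typing import Optional
from urllib.parse import urlparse


def rank_of(domain: str, snapshot: list[str]) -> Optional[int]:
    """1-based position of the first result hosted on `domain`, or None."""
    for position, url in enumerate(snapshot, start=1):
        host = urlparse(url).hostname or ""
        if host == domain or host.endswith("." + domain):
            return position
    return None


def movement(domain: str, previous: list[str], current: list[str]) -> Optional[int]:
    """Positive means the domain moved up; None means it was absent from a snapshot."""
    before, after = rank_of(domain, previous), rank_of(domain, current)
    if before is None or after is None:
        return None
    return before - after


# Two hypothetical snapshots for the same keyword, location, and device.
monday = ["https://a.example/", "https://b.example/", "https://shop.example/shoes"]
tuesday = ["https://shop.example/shoes", "https://a.example/", "https://b.example/"]

print(rank_of("shop.example", tuesday))            # 1
print(movement("shop.example", monday, tuesday))   # 2
```

The comparison only means something if both snapshots used the same location, device, and result format, which is why the list above matters.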

What Defines the Best SERP API

I judge quality based on behavior, not marketing.

The best SERP API should:

- Return results consistently across regions

- Handle Google search features correctly

- Support large query volumes without drops

- Maintain uptime without manual retries

It should also support structured output. JSON results save time and reduce errors.

Another factor that matters is pricing clarity. Pay-for-success models align incentives correctly. You should not pay for failed requests.
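A quick calculation shows why the pricing model matters. When you pay per request regardless of outcome, the real cost of each usable result is the request price divided by the success rate. The price and success rate below are made-up numbers for illustration, not quotes from any provider.

```python
def effective_cost_per_result(price_per_request: float, success_rate: float) -> float:
    """Cost of one successful result when failed requests are also billed."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return price_per_request / success_rate


# Hypothetical numbers: $0.001 per request with an 80% success rate.
billed_for_everything = effective_cost_per_result(0.001, 0.80)
billed_for_success_only = effective_cost_per_result(0.001, 1.0)

print(f"{billed_for_everything:.5f}")    # 0.00125
print(f"{billed_for_success_only:.5f}")  # 0.00100
```

In this made-up case the list price is identical, yet each usable result costs 25% more under pay-per-request billing.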

Why Free SERP APIs Need Careful Testing

Free access helps with early validation.

I recommend using a free SERP API tier to test:

- Response format

- Speed

- Error handling

- Location accuracy

Free tiers often limit volume or features. That is fine. What matters is whether the core engine behaves correctly.

Once you scale, limits appear fast. That is where paid infrastructure becomes necessary.
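A free-tier evaluation like the one described above can be run as a small probe. This is a sketch under assumptions: `fetch` is any callable you supply that takes a query and returns a parsed response, and `REQUIRED_KEYS` is a hypothetical set of fields you expect in every response. The `fake_fetch` stub replaces a real client so the example runs offline.

```python
import time
from typing import Any, Callable

REQUIRED_KEYS = {"organic", "location"}  # hypothetical response fields


def probe(fetch: Callable[[str], dict[str, Any]], queries: list[str]) -> dict[str, Any]:
    """Run each query once and summarize errors, malformed responses, and latency."""
    latencies: list[float] = []
    errors = 0
    malformed = 0
    for query in queries:
        start = time.perf_counter()
        try:
            response = fetch(query)
        except Exception:
            errors += 1  # error handling: count failures instead of crashing
            continue
        latencies.append(time.perf_counter() - start)
        if not REQUIRED_KEYS.issubset(response):
            malformed += 1  # response format: a required field is missing
    return {
        "requests": len(queries),
        "errors": errors,
        "malformed": malformed,
        "avg_latency_s": sum(latencies) / len(latencies) if latencies else None,
    }


def fake_fetch(query: str) -> dict[str, Any]:
    """Stand-in for a real API client so the sketch runs offline."""
    if query == "timeout":
        raise TimeoutError("simulated timeout")
    if query == "partial":
        return {"organic": []}
    return {"organic": [], "location": "United States"}


report = probe(fake_fetch, ["shoes", "partial", "timeout"])
print(report["errors"], report["malformed"])  # 1 1
```

Swap `fake_fetch` for your real client and run the same query list against each candidate. The numbers become comparable across providers.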

Where Thordata Fits Into SERP Data Collection

When comparing platforms, infrastructure depth matters.

Thordata operates a large global proxy network with millions of ethically sourced IPs. That matters for SERP data because search engines detect patterns quickly.

They support precise targeting by country, state, city, and ASN. This allows SERP results to reflect real local conditions.

Their SERP API retrieves results with accurate geolocation simulation and fast response times. That reduces rank mismatches and location drift.

They also handle JavaScript rendering and anti-bot defenses. This lowers block rates and keeps success rates high.

The output comes structured, which reduces parsing errors and saves development time.

From a system design perspective, this approach reduces moving parts and failure points.

Stability and Uptime Impact Long-Term Tracking

Tracking rankings over time requires consistency.

If uptime fluctuates, gaps appear in datasets. Those gaps break trend analysis.
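Gaps are easy to detect before they distort a trend line. The sketch below assumes one collection per day and uses a hypothetical helper, `missing_days`, to list every date between the first and last collection that has no data.

```python
from datetime import date, timedelta


def missing_days(collected: list[date]) -> list[date]:
    """Dates between the first and last collection with no snapshot."""
    if not collected:
        return []
    have = set(collected)
    day, last = min(have), max(have)
    gaps = []
    while day <= last:
        if day not in have:
            gaps.append(day)
        day += timedelta(days=1)
    return gaps


# Hypothetical collection log: March 3 and 4 were lost to downtime.
log = [date(2024, 3, 1), date(2024, 3, 2), date(2024, 3, 5)]
print(missing_days(log))
# [datetime.date(2024, 3, 3), datetime.date(2024, 3, 4)]
```

A check like this belongs in the pipeline itself, so a gap gets flagged the day it happens rather than weeks later in a report.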

Thordata maintains high uptime and focuses on routing efficiency. That matters when running scheduled SERP tracking at scale.

They also support automatic rotation and session control. This helps avoid detection while keeping result accuracy intact.

Choosing a SERP API That Scales With You

I suggest thinking in stages.

Early-stage teams need validation and low-friction testing.

Growth-stage teams need scale, speed, and accuracy.

Enterprise teams need compliance, support, and predictable performance.

Thordata supports this progression. Their platform handles both small tests and large-scale usage without architectural changes.

They also provide documentation and multi-language examples. That reduces onboarding friction.

Final Thoughts on SERP Scraping and APIs

SERP data drives decisions. Bad data leads to wrong conclusions.

I recommend focusing less on feature lists and more on behavior under load. Look at consistency, location accuracy, and how much work the platform removes from your plate.

A reliable SERP scraper API simplifies workflows. A solid SERP tracking API keeps insights clean. The best SERP API does both without friction.

If you approach this with clarity and discipline, your data becomes an asset instead of a liability.